Scientific journals rejected published articles, resubmitted in disguise

I returned, and saw under the sun, that the race is not to the swift, nor the battle to the strong, neither yet bread to the wise, nor yet riches to men of understanding, nor yet favour to men of skill; but time and chance happeneth to them all.

Ecclesiastes 9:11

Back in the 1980s, Douglas Peters of the University of North Dakota and Stephen Ceci of Cornell University decided to check whether the acceptance or rejection of a scientific paper by a leading journal has anything to do with the quality of the paper. They selected 12 of the most prestigious American psychological journals, which accept only 20% of submitted papers. From each journal they selected one paper that had been published between 18 and 32 months earlier, taking care that the authors of the papers were from the most prestigious universities in the United States. They substituted fictitious names and institutions for the original ones, slightly changed the titles, the abstracts, and the first few paragraphs of the introductions, and submitted the modified papers to the very same journals that had published them a few years before. Peters and Ceci reported the scandalous results of the experiment in the journal Behavioral and Brain Sciences [1].

Only three of the twelve journals detected a resubmission. Of the nine remaining papers, one was accepted and eight were rejected. The papers were not rejected for reporting something already known. Instead, the reviewers found problems with the methodology and statistical analysis. Here are examples of the referees' criticisms:

I do not think that this paper is suitable for publication; further experimental work would be required.

It is not clear what the results of this study demonstrate, partly because the method and procedures are not described in adequate detail, but mainly because of several methodological defects in the design of the study.

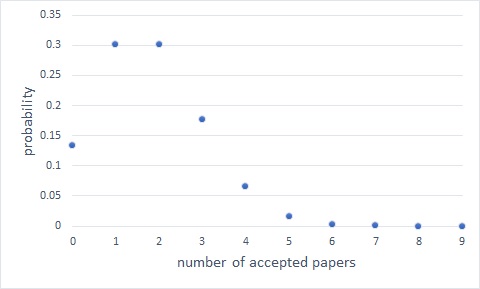

Peters and Ceci concluded that their experiment shows that editors and referees are biased in favor of authors from prestigious universities. However, Andrew Colman of the University of Leicester challenged their conclusion [2]. He argued that the results of the experiment are consistent with journals accepting papers at random. Colman computed the probabilities of accepting n out of nine papers with a 20% acceptance rate using a binomial distribution. For readers' convenience I present his results in the form of a plot:

As you can see, the outcomes with one or two accepted papers have the highest probability: about 30% each. So the actual outcome of the experiment is one of the two most likely outcomes predicted by the model of randomly accepting editors. Mathematical probability, not prejudice. In another study, a model of randomly citing scientists accounted for the empirically observed distribution of citations.
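For readers who want to check the numbers, here is a minimal sketch of the calculation described above, assuming each of the nine papers is accepted independently with probability 0.2. The function names `binomial_pmf` and `acceptance_distribution` are my own, introduced for illustration; this is not Colman's original code.

```python
from math import comb


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials,
    each succeeding with probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def acceptance_distribution(n_papers=9, acceptance_rate=0.2):
    """Probabilities of 0, 1, ..., n_papers acceptances under the
    assumption that editors accept papers at random."""
    return [
        binomial_pmf(k, n_papers, acceptance_rate)
        for k in range(n_papers + 1)
    ]


if __name__ == "__main__":
    # Print the probability of each possible number of accepted papers.
    for k, prob in enumerate(acceptance_distribution()):
        print(f"{k} accepted: {prob:.3f}")
```

The values for one and two accepted papers coincide at roughly 0.302, which is the 30% figure quoted above.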

In a series of related experiments, publishers rejected literary classics submitted as works of unknown authors. In another study, the experimenters presented an actor as a renowned psychologist. He went on to deliver a nonsensical lecture. The audience of Ph.D. psychologists did not notice anything wrong.

Mikhail Simkin

February 7, 2018